Love at first beep

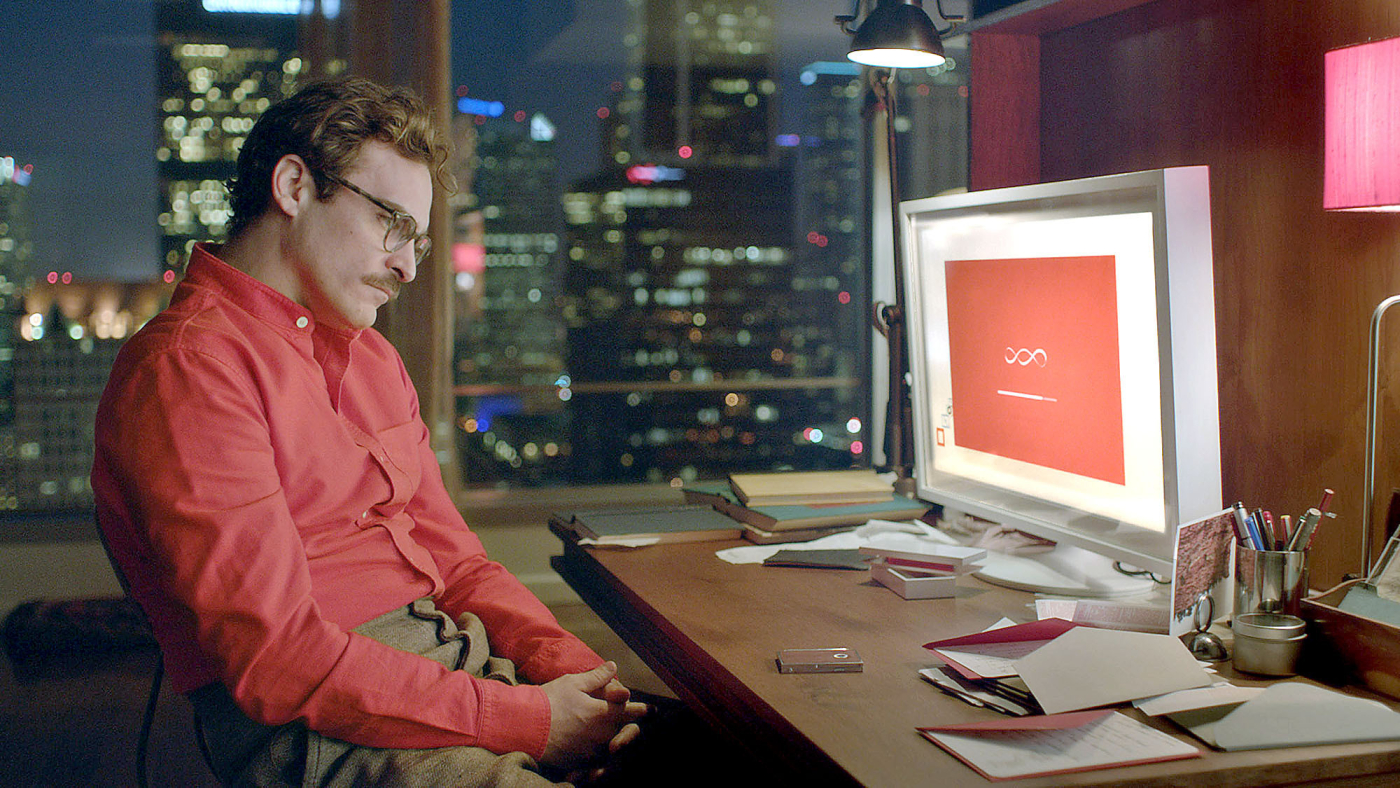

"It has been a long time since I've been with somebody with whom I'm totally at ease with." The movie Her shows a loving relationship between Theodore and the operating system Samantha. According to Dr. Zerrin Yumak (Information and Computing Sciences, UU), whether we will be able to form social and emotional relationships with robots in the near future is still an open question. Before we can fall in love with them, several obstacles need to be overcome.

Robotics nowadays

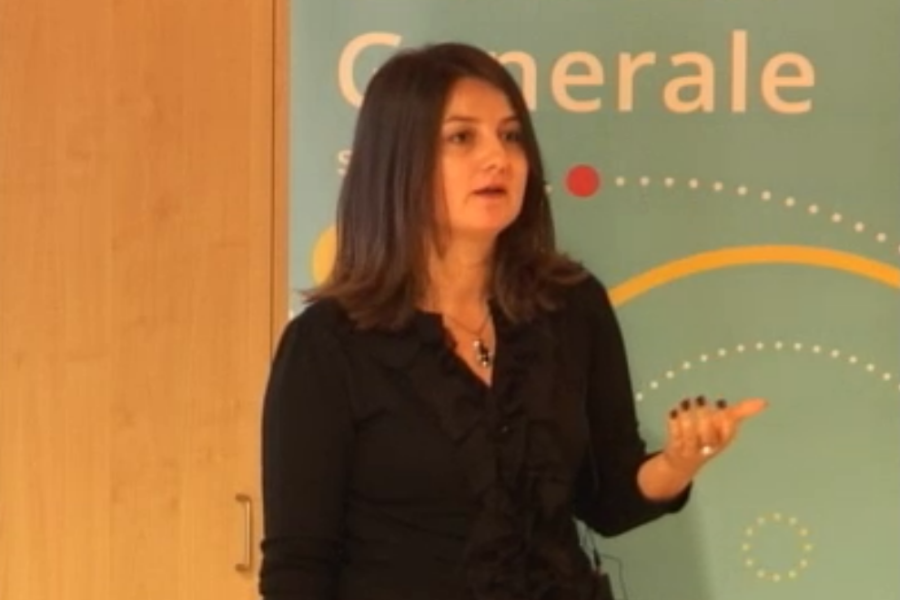

Robots are now being used as companions, assistants and helpers. Yumak explains that this development is part of a broader trend: more and more robots are being built to understand and respond to socio-emotional cues in order to improve our quality of life. Yumak currently leads the Embodiment and Physical Interaction project, which is developing Eva, an interactive virtual character that helps people practice their job interview skills. Eva can report on users' progress, spark interest in the topics being discussed and improve learning outcomes through review. Could we one day form even more complex relationships with robots?

Intelligent social behavior

This is a tough question, mostly because it touches on many scientific fields, such as psychology, neuroscience and philosophy. With the help of these disciplines, computer science can take emotions and social behavior into account in artificial intelligence (AI). Robots and virtual characters are now able to detect primary emotions when they interact with human beings. They can tell from your facial expression, your physiological reactions and the way you use your voice whether you're happy, sad, angry or surprised.

However, robots and virtual characters still have great difficulty sensing more complex emotions. There are two reasons for this. First, there is still much debate about how to define words such as love, consciousness and engagement. It's all about semantics. Second, many expressions depend heavily on context. A smile can mean many things other than utter happiness. It can be meant ironically, it can show that someone is very uncomfortable with a situation, or it can express nothing more than socially accepted behavior while you are standing in line at a grocery store.

What will happen?

The biggest challenge for AI is getting from low-level representations, such as answering the question of what time a meeting starts, to high-level representations of believable behavior, such as laughing when you tell a robot a funny joke. Socially intelligent machines need to be able to express and perceive emotions and to communicate naturally through speech, facial expressions and gestures. Moreover, they ought to exhibit distinctive personalities and learn how to recognize others. In this way, AI researchers are trying to create behavior that is credible. However, simulating believable behavior is still a work in progress.

So the question remains: are we going to build computers with their own personalities, emotions, motivations, interests and even love? The answer is perhaps as unsatisfying as it is unsettling: we don't know yet. The bottom line is that we still have a long way to go before we can develop genuine relationships with robots and virtual characters. Only when multiple scientific disciplines work together, for instance to figure out the ethical implications of interacting with robots, will we be able to create robots that behave believably.

'Relations with Robots' is the final lecture in the 'Breaking Beta' lecture series.